UX Patterns for AI Explainability and Trust

The best AI UX does not explain everything. It explains the right thing at the right moment so users trust the product and act.

Users do not need your AI to be fully explainable; they need it to be predictably trustworthy.

Most founders get this wrong. They add a giant “Why this result?” panel, dump model reasoning into the UI, and call it transparency. That usually makes trust worse, not better, because raw explanation without context feels noisy, inconsistent, and easy to doubt.

Table of Contents

- Trust is a UX problem before it is a model problem

- The core UX patterns that actually build trust

- When to show explanations and when to stay quiet

- How to design explanation depth without overwhelming users

- The system design behind trustworthy AI interfaces

- What to do next

Trust is a UX problem before it is a model problem

If users cannot predict what your AI will do next, they will not trust it even when the model is technically accurate.

That is the important distinction: accuracy and trust are related, but they are not the same thing. A system can be right often and still feel unreliable if it changes tone, output structure, confidence, or behavior without warning.

This is why explainability should start in product design, not in model research.

- Users trust systems that are legible. They want to know what the AI saw, what it ignored, how confident it is, and what they can do if it is wrong.

- Users do not want a machine learning lecture. They want enough explanation to make the next decision safely.

- Users trust guardrails more than cleverness. A visible fallback, edit path, undo state, or source citation does more for trust than a beautifully phrased model summary.

A useful reference point here is how users calibrate reliance. According to the Nielsen Norman Group, users tend to overtrust or undertrust AI depending on how the interface presents capability and uncertainty, not just on underlying performance. Interface framing strongly shapes how much people rely on the output.

Another reference point is broader user sentiment. Pew Research found that 52% of Americans are more concerned than excited about AI in daily life. That means your default assumption should be skepticism, not enthusiasm.

The design response is simple: make the system easier to verify than to admire.

The fastest way to increase trust in an AI product is not better copy. It is reducing the cost of checking whether the AI is wrong.

We have seen this in practice across AI products. On Harmony.ai, the hard part was not getting the LLM to produce workflow steps. The hard part was making multi-step tool-calling chains feel dependable when one weak intermediate step could poison the final output.

So the product pattern we favor is not “magic first.” It is “inspectable automation.”

That means the interface should expose:

- The input context the model used, because hidden context makes output feel arbitrary.

- The action the model plans to take, because users need to understand consequence before they approve automation.

- The confidence or uncertainty state, because false certainty destroys credibility faster than honest doubt.

- The recovery path, because trust rises when errors feel fixable.

This is the same product logic we apply in early-stage product work more broadly. In MVP Design: What to Keep and What to Cut, the core principle is cutting anything that does not help the user complete a real task. AI explainability should follow the same rule. If the explanation does not help a user decide, verify, or correct, it is interface clutter.

The core UX patterns that actually build trust

The best AI trust patterns are not futuristic. Most of them are familiar product design patterns applied with more precision.

Here are the ones that matter most.

1. Show input provenance

Users should be able to see what the AI based its answer on.

- If the answer came from uploaded documents, show the filenames, timestamps, and relevant excerpts inline.

- If the answer came from user profile data or prior actions, say that clearly in plain language.

- If the answer is generated without verifiable sources, label it as a generated estimate or suggestion instead of presenting it like a fact.

This is one reason retrieval-backed interfaces often feel more trustworthy than pure chat. The output is anchored to visible evidence.
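The three provenance rules above can be sketched as a small labeling function. This is a minimal sketch that assumes a hypothetical `Provenance` type, which the backend attaches to each answer. The type, the `provenanceLabel` function, and the label wording are illustrative and do not come from any existing API.

```typescript
// Hypothetical provenance shapes; the backend would attach one to each answer.
type Provenance =
  | { kind: "documents"; files: { name: string; updatedAt: string; excerpt: string }[] }
  | { kind: "userData"; description: string }
  | { kind: "generated" };

// Plain-language line shown next to the answer. Excerpts are rendered
// separately, inline with the answer, and are not part of this label.
function provenanceLabel(p: Provenance): string {
  switch (p.kind) {
    case "documents":
      // Filenames plus timestamps let users check what was read and how recent it is.
      return "Based on " + p.files.map((f) => `${f.name} (updated ${f.updatedAt})`).join(", ");
    case "userData":
      // Say plainly when the answer draws on the user's own profile or history.
      return `Based on ${p.description}`;
    case "generated":
      // No verifiable source: label the output as an estimate, not a fact.
      return "Generated estimate: no source you can check";
  }
}
```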

At bytelabs, we learned a version of this on Utkrusht.ai. We built the frontend in Next.js and the backend in Python FastAPI, and one of the core UX problems was streaming LLM responses without blocking the UI thread. Streaming improved responsiveness, but it also exposed a trust issue: users were seeing an answer appear before they understood what context it came from.

The fix was not just technical. We paired response streaming with visible context framing so users could see the campaign inputs and generation basis while the output was arriving. That made the system feel faster and more credible at the same time.
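The post does not show how Utkrusht.ai implemented this. What follows is a generic sketch of the pattern only, and it assumes a hypothetical frame format. A server-side wrapper can guarantee that a context frame is emitted before any answer tokens, so the UI can render the generation basis first. `Frame` and `withContextFrame` are illustrative names.

```typescript
// Hypothetical frame types for a streamed response.
type Frame =
  | { kind: "context"; inputs: string[] } // what the model is working from
  | { kind: "token"; text: string }       // incremental answer text
  | { kind: "done" };

// Wrap a raw token stream so the context frame always arrives first.
async function* withContextFrame(
  inputs: string[],
  tokens: AsyncIterable<string>,
): AsyncGenerator<Frame> {
  yield { kind: "context", inputs };
  for await (const text of tokens) {
    yield { kind: "token", text };
  }
  yield { kind: "done" };
}
```

On the client, the first frame fills the context panel and later `token` frames append to the answer. The basis is therefore visible before the text finishes arriving.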

2. Use confidence labels carefully

Confidence is useful only when users know what it means.

Bad pattern:

- A green badge that says “92% confidence” with no explanation of what is being measured.

Better pattern:

- “High match based on 8 similar previous cases.”

- “Low confidence because key account data is missing.”

- “This answer may be incomplete because only 2 of 5 files were processed.”

Concrete language beats abstract probability. Most users do not know how to operationalize a confidence score, but they do understand missing data and evidence quality.
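This sketch turns evidence into the concrete messages above instead of a bare percentage. It assumes the backend can report a hypothetical `Evidence` summary covering matched cases, missing fields, and file coverage. The field names and the cutoff of 5 cases for "High" are assumptions.

```typescript
// Hypothetical evidence summary reported alongside each answer.
interface Evidence {
  similarCases: number;    // prior cases the answer was matched against
  missingFields: string[]; // required inputs that were absent
  filesProcessed: number;
  filesTotal: number;
}

// Return the most actionable explanation first: missing data, then
// partial coverage, then how much matching evidence there is.
function confidenceMessage(e: Evidence): string {
  if (e.missingFields.length > 0) {
    const verb = e.missingFields.length === 1 ? "is" : "are";
    return `Low confidence because ${e.missingFields.join(" and ")} ${verb} missing.`;
  }
  if (e.filesProcessed < e.filesTotal) {
    return `This answer may be incomplete because only ${e.filesProcessed} of ${e.filesTotal} files were processed.`;
  }
  if (e.similarCases === 0) {
    return "No similar previous cases found, so treat this as a suggestion.";
  }
  // The cutoff of 5 cases for "High" is an arbitrary assumption.
  const strength = e.similarCases >= 5 ? "High" : "Moderate";
  const noun = e.similarCases === 1 ? "case" : "cases";
  return `${strength} match based on ${e.similarCases} similar previous ${noun}.`;
}
```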

3. Separate facts, inferences, and recommendations

Many AI interfaces blur these together. That is a mistake.

A trustworthy answer should visually distinguish:

- What the system knows from source data.

- What the model inferred from patterns.

- What the product recommends the user do next.

This can be as simple as three labeled blocks. It can also be handled with typography, iconography, or collapsible sections.

The point is consistency. If one section is verifiable and another is speculative, the interface must not style them as if they carry equal certainty.
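The three-way split can be enforced in data rather than left to each screen. This minimal sketch assumes a hypothetical `Statement` type in which each line of an answer is tagged with its kind. The headings are illustrative choices, and so is the rule that an unsourced "fact" is shown as an inference.

```typescript
// Hypothetical tagging of each line in an answer.
type StatementKind = "fact" | "inference" | "recommendation";

interface Statement {
  kind: StatementKind;
  text: string;
  sourceId?: string; // the source record a fact comes from
}

// Fixed headings per kind so the three never look interchangeable.
const HEADINGS: Record<StatementKind, string> = {
  fact: "From your data",
  inference: "Inferred",
  recommendation: "Suggested next step",
};

// Assumption: a "fact" with no sourceId cannot be verified, so it is shown as an inference.
function effectiveKind(s: Statement): StatementKind {
  return s.kind === "fact" && !s.sourceId ? "inference" : s.kind;
}

// Group statements into labeled blocks in a stable order, dropping empty blocks.
function groupStatements(statements: Statement[]): { heading: string; items: string[] }[] {
  const order: StatementKind[] = ["fact", "inference", "recommendation"];
  return order
    .map((kind) => ({
      heading: HEADINGS[kind],
      items: statements.filter((s) => effectiveKind(s) === kind).map((s) => s.text),
    }))
    .filter((block) => block.items.length > 0);
}
```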

4. Design an obvious correction path

Users trust AI more when they can steer it.

- Add “edit input” next to generated output, not buried in settings.

- Let users reject, regenerate, or refine a result with one click.

- Preserve version history when outputs affect workflows, approvals, or customer-facing content.
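This minimal sketch models the correction path as an append-only version history, using a hypothetical `OutputHistory` class held per generated output. Edits, regenerations, and reverts each add a version rather than overwriting one, which keeps both an undo path and an audit record. Timestamps are passed in explicitly to keep the sketch deterministic.

```typescript
// Hypothetical record of how each version came to exist.
type Origin = "generated" | "regenerated" | "edited" | "reverted";

interface Version {
  text: string;
  origin: Origin;
  at: number; // ms timestamp
}

// Append-only history: edits, regenerations, and reverts add versions;
// nothing is overwritten, so earlier outputs stay auditable.
class OutputHistory {
  private versions: Version[] = [];
  private rejectedAt: number | null = null;

  constructor(initial: string, at: number) {
    this.versions.push({ text: initial, origin: "generated", at });
  }

  get current(): Version {
    return this.versions[this.versions.length - 1];
  }

  get all(): readonly Version[] {
    return this.versions;
  }

  get isRejected(): boolean {
    return this.rejectedAt !== null;
  }

  edit(text: string, at: number): void {
    this.versions.push({ text, origin: "edited", at });
  }

  regenerate(text: string, at: number): void {
    this.versions.push({ text, origin: "regenerated", at });
  }

  // Undo by copying an earlier version forward instead of deleting later ones.
  revertTo(index: number, at: number): void {
    const target = this.versions[index];
    if (!target) throw new RangeError(`No version at index ${index}`);
    this.versions.push({ text: target.text, origin: "reverted", at });
  }

  reject(at: number): void {
    this.rejectedAt = at;
  }
}
```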

On BeYourSexy.ai, one challenge was the cold-start problem for new users with no history. We solved the recommendation side with embedding-based similarity from onboarding answers, but that only works as a trust-building experience if the user can quickly adjust poor assumptions.

So the right UX pattern in systems like this is not “We know you.” It is “Here is our best starting point, and here is how to tune it fast.” That is far more believable.
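The post only says that recommendations used embedding-based similarity from onboarding answers. This generic sketch assumes the onboarding answers and items are already embedded as vectors by some model (not shown). The "best starting point" is a cosine-similarity ranking. The "tune it fast" part is a simple Rocchio-style update applied on each tap. `rank`, `adjustProfile`, and the 0.3 default rate are hypothetical.

```typescript
// Hypothetical item with a precomputed embedding.
interface Item {
  id: string;
  embedding: number[];
}

// Cosine similarity between two equal-length vectors (0 if either is all zeros).
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let na = 0;
  let nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  return na === 0 || nb === 0 ? 0 : dot / (Math.sqrt(na) * Math.sqrt(nb));
}

// Starting point: rank items by similarity to the embedded onboarding answers.
function rank(profile: number[], items: Item[]): string[] {
  return [...items]
    .sort((x, y) => cosine(profile, y.embedding) - cosine(profile, x.embedding))
    .map((item) => item.id);
}

// Fast tuning: a "more like this" (+1) or "less like this" (-1) tap moves the
// profile toward or away from that item (a simple Rocchio-style update).
function adjustProfile(profile: number[], item: number[], direction: 1 | -1, rate = 0.3): number[] {
  return profile.map((v, i) => v + direction * rate * item[i]);
}
```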

5. Match explanation type to risk level

Not every AI action deserves the same treatment.

| AI use case | User risk | Best explanation pattern |
|---|---|---|
| Writing assistance | Low | Short rationale and editable output |
| Product recommendations | Medium | Why this item, what signals were used, and quick preference controls |
| Workflow automation | Medium to high | Step preview, trigger conditions, and approval checkpoint |
| Financial, legal, or health guidance | High | Source-backed evidence, uncertainty disclosure, and mandatory human review |

This is where many startups overbuild. They create one global explanation system for every feature. That is inefficient.

Use shallow explanations for low-risk moments and deep verification for high-risk ones.

When to show explanations and when to stay quiet

Always-on explainability is usually bad UX.

If every screen is loaded with “why,” “how,” “source,” “confidence,” and “limitations,” users stop reading all of it. Trust does not come from volume. It comes from timing.

The right pattern is progressive disclosure.

- Show the minimum explanation needed to support the immediate action.

- Reveal deeper detail only when the user signals doubt, risk, or intent to verify.

- Escalate explanation depth automatically when the action has higher consequence.

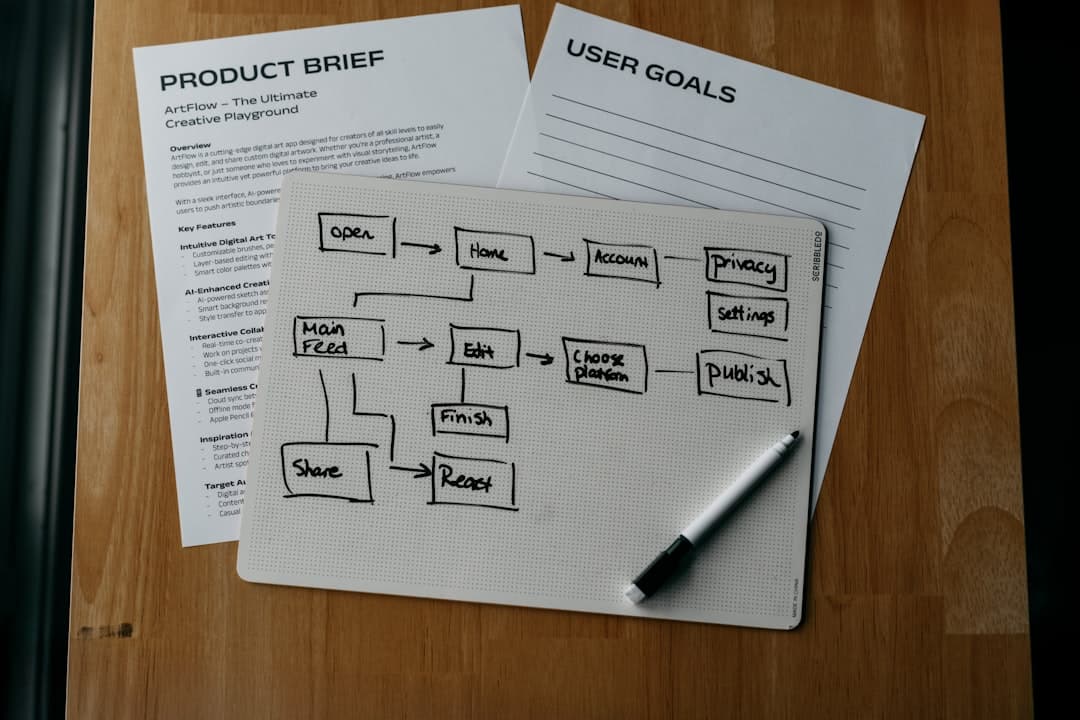

This is the same reason good onboarding works. You do not explain the entire product on screen one. You reveal just enough for the next step. We wrote about this more directly in Mobile App Onboarding Best Practices That Convert, and the principle carries over cleanly to AI products.

A few practical rules work well.

Show explanations by default when:

- The AI is making or suggesting a consequential decision.

- The output relies on hidden inputs the user may not expect.

- The result is likely to conflict with user intuition.

- The system confidence is low or evidence is weak.

Keep explanations collapsed when:

- The task is low-risk and highly reversible.

- The user has already seen this pattern repeatedly and learned it.

- The output is obvious from visible inputs.

- The explanation would repeat the same generic template without adding decision value.

Here is a simple interface rule set teams can actually implement:

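```typescript
type Risk = 'low' | 'medium' | 'high'
type ExplanationLevel = 'full' | 'summary' | 'minimal'

// Pick the default explanation depth for one AI output (confidence in 0..1).
function explanationLevel(risk: Risk, confidence: number, isReversible: boolean): ExplanationLevel {
  if (risk === 'high') return 'full' // consequential actions always get full detail
  if (confidence < 0.6) return 'full' // weak evidence should be visible
  if (!isReversible) return 'full' // no undo, so let users verify first
  if (risk === 'medium') return 'summary'
  return 'minimal' // low risk, reversible, and confident
}
```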

That kind of product logic matters because explainability is not just content design. It is conditional UX.
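`explanationLevel` covers risk, confidence, and reversibility. The other show-by-default and keep-collapsed rules listed earlier can sit alongside it. This sketch assumes hypothetical signals, such as `usesHiddenInputs` and `timesSeenPattern`, that the product would have to compute itself. The ordering of the rules is also an assumption: consequential and low-confidence outputs always expand, while familiarity collapses the rest after 3 exposures.

```typescript
// Hypothetical per-output signals; how each one is computed is product-specific.
interface DisplaySignals {
  consequential: boolean;          // making or suggesting a consequential decision
  lowConfidence: boolean;          // low confidence or weak evidence
  usesHiddenInputs: boolean;       // relies on inputs the user may not expect
  contradictsExpectation: boolean; // likely to conflict with user intuition
  timesSeenPattern: number;        // how often this user has seen this explanation
}

// True when the explanation panel should start expanded.
function expandByDefault(s: DisplaySignals, learnedAfter = 3): boolean {
  // Stakes and weak evidence always expand, even for experienced users.
  if (s.consequential || s.lowConfidence) return true;
  // Surprising outputs expand until the user has seen the pattern enough times.
  const learned = s.timesSeenPattern >= learnedAfter;
  if ((s.usesHiddenInputs || s.contradictsExpectation) && !learned) return true;
  // Everything else (low-risk, obvious, or familiar) stays collapsed.
  return false;
}
```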

Google's guidance on designing human-centered AI experiences is a credible reference on this broader challenge.

Another counterintuitive point: sometimes the best explanation is a delay.

If the model is still gathering context, running tools, or checking sources, saying “Reviewing 3 files and 2 previous actions” is better than instantly displaying a confident-looking placeholder answer. Users trust visible process more than polished speed when the task is important.
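This sketch generates that kind of status line from work still in progress. It assumes the agent loop exposes a hypothetical list of pending steps. `PendingStep`, `progressMessage`, and the step kinds are illustrative.

```typescript
// Hypothetical kinds of work an agent loop might still be doing.
type StepKind = "file" | "previousAction" | "sourceCheck";

interface PendingStep {
  kind: StepKind;
}

// Singular and plural nouns for each kind of step.
const NOUNS: Record<StepKind, [string, string]> = {
  file: ["file", "files"],
  previousAction: ["previous action", "previous actions"],
  sourceCheck: ["source", "sources"],
};

// Summarize pending work, e.g. "Reviewing 3 files and 2 previous actions".
function progressMessage(steps: PendingStep[]): string {
  const counts = new Map<StepKind, number>(); // keeps first-seen order
  for (const s of steps) counts.set(s.kind, (counts.get(s.kind) ?? 0) + 1);
  const parts = Array.from(counts.entries()).map(
    ([kind, n]) => `${n} ${NOUNS[kind][n === 1 ? 0 : 1]}`,
  );
  if (parts.length === 0) return "Preparing answer";
  const list =
    parts.length === 1
      ? parts[0]
      : `${parts.slice(0, -1).join(", ")} and ${parts[parts.length - 1]}`;
  return `Reviewing ${list}`;
}
```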

We saw a related tradeoff on Harmony.ai. Prompt token count was the biggest cost driver, and we reduced that by caching intermediate outputs. That engineering decision also helped UX because repeated steps became more stable and faster, which made workflow suggestions feel less random. Users often describe consistency as intelligence, even when the real win was architecture, not model quality.
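The post does not describe Harmony.ai's cache. This minimal sketch of the general idea assumes each workflow step's output depends only on the step name and its input. It memoizes step results under a key built from both, so repeated steps skip the model call and return identical output. `StepRunner` and `cachedRunner` are hypothetical. A production cache would also need eviction, plus a prompt version in the key so entries are invalidated when prompts change.

```typescript
// Hypothetical signature for running one workflow step through the model.
type StepRunner = (step: string, input: string) => Promise<string>;

// Wrap a step runner so repeated (step, input) pairs reuse the first result
// instead of paying for another model call.
function cachedRunner(runStep: StepRunner): { run: StepRunner; misses: () => number } {
  const cache = new Map<string, Promise<string>>();
  let misses = 0;
  const run: StepRunner = (step, input) => {
    const key = JSON.stringify([step, input]); // unambiguous key for the pair
    let result = cache.get(key);
    if (result === undefined) {
      misses++;
      result = runStep(step, input); // caching the promise also dedupes concurrent calls
      cache.set(key, result);
      // Do not keep failures: drop the entry so a later call can retry.
      result.catch(() => cache.delete(key));
    }
    return result;
  };
  return { run, misses: () => misses };
}
```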

How to design explanation depth without overwhelming users

Most AI explanation UIs fail because they are designed as a single layer. Trust needs at least three layers.

I recommend a tiered model.

Layer 1: Instant reassurance

This is what the user sees first.

- A short statement of what the AI did.

- A short statement of what it used.

- A clear next action.

Example:

- “Drafted a reply using the last customer message and your support policy.”

- “Low confidence because the refund date is missing.”

- “Add date” or “Send to agent for review.”

Layer 2: Decision support

This is for users who want to verify before acting.

- Show source excerpts, matched examples, or business rules applied.

- Highlight assumptions in plain language.

- Make the confidence explanation concrete rather than numeric when possible.

Layer 3: Full audit trail

This is for admins, compliance-heavy products, and operational debugging.

- Show data sources, tool calls, timestamps, and versioned prompts or rules.

- Record whether a user accepted, edited, or rejected the output.

- Preserve enough detail for post hoc analysis without exposing raw chain-of-thought.

That last point matters. Do not dump internal reasoning traces into the UI just because you can. It often confuses users, leaks implementation detail, and creates new safety issues.

Anthropic’s and OpenAI’s public guidance has pushed teams toward safer summaries and structured justifications rather than exposing raw reasoning directly, and that is the right call for product UX. Your interface should explain the basis of the result, not narrate every hidden token path.
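The three layers can share one response shape that the UI reveals progressively. This is a sketch with hypothetical field names. Note that the audit layer records sources, tool calls, and a prompt version, not raw reasoning traces.

```typescript
// Hypothetical shape for a three-layer explanation.
interface InstantLayer {
  did: string;        // "Drafted a reply"
  used: string;       // "the last customer message and your support policy"
  nextAction: string; // "Add date"
}

interface DecisionLayer {
  excerpts: string[];
  assumptions: string[];
  confidenceReason: string; // concrete, e.g. "refund date is missing"
}

interface AuditLayer {
  sources: string[];
  toolCalls: { name: string; at: string }[];
  promptVersion: string;
  userDecision?: "accepted" | "edited" | "rejected";
}

interface LayeredExplanation {
  instant: InstantLayer;
  decision: DecisionLayer;
  audit: AuditLayer;
}

// Reveal only as many layers as the user has asked for (1 = default view).
function visibleLayers(e: LayeredExplanation, depth: 1 | 2 | 3): Partial<LayeredExplanation> {
  if (depth === 1) return { instant: e.instant };
  if (depth === 2) return { instant: e.instant, decision: e.decision };
  return e;
}
```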

A practical visual hierarchy helps here.

- Put the result first, because users came to complete a task.

- Put the key rationale second, because they need a reason to trust or challenge it.

- Put controls third, because agency matters more than exposition.

- Put full details behind an expansion, because most users do not need them every time.

This is a design systems problem too. If you are building AI features into an existing product, define consistent components for:

- Confidence states.

- Source citation blocks.

- Warnings and uncertainty messages.

- Human review checkpoints.

- Regenerate, edit, and override actions.

Without those components, every feature team invents its own trust language. That inconsistency is expensive.
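This sketch shows a shared contract for those components. It assumes a hypothetical `TrustComponent` union that every feature renders through one function, so the wording of confidence states, citations, warnings, and review states is defined once. The copy strings are placeholders.

```typescript
// Hypothetical shared vocabulary for trust UI across feature teams.
type TrustComponent =
  | { type: "confidence"; level: "low" | "medium" | "high"; reason: string }
  | { type: "citation"; label: string; sourceId: string }
  | { type: "warning"; message: string }
  | { type: "reviewCheckpoint"; reviewer: string }
  | { type: "action"; action: "regenerate" | "edit" | "override"; label: string };

// One place defines the trust language; features only supply the data.
function renderText(c: TrustComponent): string {
  switch (c.type) {
    case "confidence":
      return `${c.level[0].toUpperCase()}${c.level.slice(1)} confidence: ${c.reason}`;
    case "citation":
      return `Source: ${c.label}`;
    case "warning":
      return `Check before using: ${c.message}`;
    case "reviewCheckpoint":
      return `Needs review by ${c.reviewer}`;
    case "action":
      return c.label;
  }
}
```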

According to Baymard Institute, nearly 70% of ecommerce sites have serious usability issues in checkout flow patterns. Different domain, same lesson: users drop off when high-stakes interfaces are inconsistent. AI products are often even less forgiving because the system can feel opaque before the user makes a single mistake.

This is also why AI explainability should be part of product scope from day one, not bolted on later. If you are still defining what your MVP should include, MVP Design: What to Keep and What to Cut is the right lens: keep the smallest set of trust mechanisms that make the AI safe to use, and cut the decorative “AI magic” that does not improve outcomes.

The system design behind trustworthy AI interfaces

Trustworthy AI UX is impossible if the underlying system cannot produce stable metadata.

Designers often specify source chips, confidence states, and decision reasons, but engineering cannot render them cleanly unless the backend is structured for it.

That means the product should treat explanation as first-class output, not as an afterthought generated from logs.

A reliable AI response object often needs fields like these:

```json
{
  "answer": "Recommend follow-up email sequence B.",
  "confidence_label": "medium",
  "reason_summary": "The lead matched 6 prior conversions in fintech.",
  "sources": [
    {"type": "crm", "label": "Lead profile", "id": "123"},
    {"type": "campaign", "label": "Previous sequence performance", "id": "seq_8"}
  ],
  "assumptions": [
    "Industry classification is inferred from company description."
  ],
  "actions": [
    {"type": "approve", "label": "Use this sequence"},
    {"type": "edit", "label": "Adjust audience filters"}
  ]
}
```

This structure gives the frontend something trustworthy to render consistently.

It also forces a product decision: if your system cannot state why it made a recommendation in a compact, user-readable format, you probably should not automate that recommendation yet.
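That product decision can be enforced in code before any automation runs. Below is a sketch in TypeScript that mirrors the JSON fields above. `canAutomate` is an assumed gate, not a standard. Its rule requires a non-empty, compact reason summary, at least one source, and a confidence label other than "low". The 200-character limit is arbitrary, and the `reject` action type is an added assumption.

```typescript
// Hypothetical types mirroring the response object's fields.
interface Source { type: string; label: string; id: string }
interface Action { type: "approve" | "edit" | "reject"; label: string }

interface AiResponse {
  answer: string;
  confidence_label: "low" | "medium" | "high";
  reason_summary: string;
  sources: Source[];
  assumptions: string[];
  actions: Action[];
}

// Only allow automation when the system can state why, compactly, with evidence.
function canAutomate(r: AiResponse, maxReasonLength = 200): boolean {
  const reason = r.reason_summary.trim();
  return (
    reason.length > 0 &&
    reason.length <= maxReasonLength && // "compact, user-readable"
    r.sources.length > 0 &&
    r.confidence_label !== "low"
  );
}
```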

We made a similar architecture-first decision on Surge. It was a Next.js platform serving thousands of concurrent users with real-time updates over WebSockets. We rebuilt the data layer twice: first with Supabase realtime, then with custom Postgres plus Redis pub/sub because Supabase realtime added 200ms+ latency under load.

That was not just a performance issue. In real-time products, stale or delayed state kills trust because users stop believing what they see. A trustworthy interface is one where explanations, statuses, and underlying system behavior stay aligned.
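One small way to keep displayed state aligned with reality assumes each realtime update carries a timestamp. When the last update is older than a threshold, the UI labels the data as possibly out of date instead of presenting it as live. `LiveState`, `statusLabel`, and the 5-second threshold are hypothetical. Comparing server timestamps to a client clock also assumes the two clocks are roughly in sync.

```typescript
// Hypothetical wrapper for a value pushed over a realtime channel.
interface LiveState<T> {
  value: T;
  updatedAt: number; // ms since epoch, stamped when the update was produced
}

type Freshness = "live" | "stale";

function freshness<T>(s: LiveState<T>, now: number, staleAfterMs = 5000): Freshness {
  return now - s.updatedAt > staleAfterMs ? "stale" : "live";
}

// Status text shown next to real-time values, so delays are visible rather than hidden.
function statusLabel<T>(s: LiveState<T>, now: number, staleAfterMs = 5000): string {
  if (freshness(s, now, staleAfterMs) === "live") return "Live";
  const seconds = Math.round((now - s.updatedAt) / 1000);
  return `Last updated ${seconds}s ago`;
}
```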

There is a startup lesson here too.

- If your AI feature touches high-value actions, store the evidence behind each output.

- If users can override the model, log that feedback as product signal, not just analytics noise.

- If you have multiple explanation patterns across the app, standardize them now before inconsistency spreads.

- If the model cannot produce a stable rationale, reduce automation scope until it can.
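This sketch treats accept, edit, reject, and override decisions as product signal. It assumes a hypothetical `FeedbackEvent` record, stored together with the evidence IDs shown alongside each output. The per-feature share of outputs not accepted as-is is a rough indicator of where trust is breaking.

```typescript
// Hypothetical feedback record written whenever a user acts on an AI output.
type Decision = "accept" | "edit" | "reject" | "override";

interface FeedbackEvent {
  outputId: string;
  feature: string;
  decision: Decision;
  evidenceIds: string[]; // the sources shown with the output, stored with the decision
}

// Share of outputs per feature that users did not accept as-is.
function nonAcceptRate(events: FeedbackEvent[]): Map<string, number> {
  const totals = new Map<string, { all: number; notAccepted: number }>();
  for (const e of events) {
    const t = totals.get(e.feature) ?? { all: 0, notAccepted: 0 };
    t.all++;
    if (e.decision !== "accept") t.notAccepted++;
    totals.set(e.feature, t);
  }
  const rates = new Map<string, number>();
  totals.forEach((t, feature) => rates.set(feature, t.notAccepted / t.all));
  return rates;
}
```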

This is one reason product and engineering decisions should not be split too late in the process. In Development User Stories, we argue that a good story should capture behavior, not just features. For AI trust, that means writing stories like: “As a user, I need to see what evidence informed this recommendation before I approve it.” That creates much better products than “Add explainability panel.”

The most practical trust stack for early-stage teams looks like this:

| Layer | What to implement first | Why it matters |
|---|---|---|
| UX | Source visibility, uncertainty states, edit/override controls | These directly affect user trust and action rate |
| Product logic | Risk-based explanation depth and approval thresholds | These stop overexplaining low-risk tasks and underexplaining high-risk ones |
| Backend | Structured rationale fields and source metadata | These make trust patterns consistent instead of ad hoc |
| Analytics | Track accept, edit, reject, and override behavior | These reveal where trust is breaking in real use |

If you skip one of these layers, the system feels fake. The interface says “trust me,” but the product cannot support that promise.

What to do next

Audit one AI workflow in your product this week and remove every explanation element that does not help a user verify, decide, or correct.

Then add exactly four things:

- One plain-language statement of what the AI used.

- One visible uncertainty or confidence cue that explains itself.

- One obvious correction or override action.

- One deeper inspection path for high-risk moments.

Do not start with a giant explainability dashboard. Start at the decision point where trust is currently breaking.

If you are still shaping the feature, define the explanation contract before you finalize UI. Decide what evidence the system will expose, what assumptions it can admit, and when human review is mandatory. That work will improve both design quality and engineering clarity.

The real goal is not making AI feel smart. The goal is making users feel in control when the AI is useful and cautious when it is not.

If you're at this stage, schedule a call with us.